Part 9 – Emerging AI Abilities

Lessons from Nature

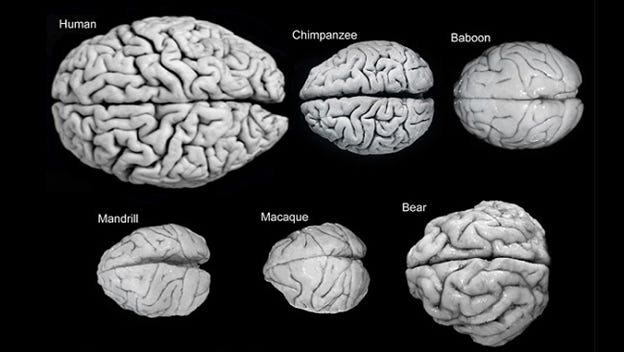

Primates evolved more complex brains by increasing the size of only a few regions, especially the cerebral cortex in humans. [2] New capabilities became possible in larger brains. Brains scale with body size. Primates have larger brains for a given body weight than other mammals. Among primates, human brains are the largest normalized for body weight. New capabilities emerged as primates evolved bigger brains, such as group hunting and social communication. Something similar has happened with deep learning networks as they increased in size and complexity.

The amount of computing power needed to solve problems in vision and language was inconceivable to us when neural network learning algorithms were pioneered in the 1980s. Forty years ago, we did not know how well neural network models would scale, how much scaling would be needed to solve real-world problems, or how long it would take. We believed they could scale because cognitive capabilities increased as the cerebral cortex of primates expanded, a proof of principle for scaling.

How an algorithm scales with the size of a problem is a general principle in AI, mathematics, science, and engineering. It can determine whether an approach to solving a problem can be accomplished with current computers or is hopelessly impractical. As digital computers became millions of times more powerful over the last 40 years, thresholds were reached where new capabilities became possible. Today, the largest transformers have trillions of weights, or parameters, learned by training on texts with trillions of words, and as they grew larger, new capabilities emerged that were not expected.
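How scaling decides what is practical can be made concrete with a small sketch. It assumes three hypothetical cost models (linear, quadratic, and exponential in the problem size n) and an assumed budget of a billion operations on the older machine; none of these numbers come from the text except the million-fold speedup. The function finds the largest problem size that fits within a budget.

```python
def largest_feasible_n(cost, ops_budget):
    """Return the largest integer n >= 1 with cost(n) <= ops_budget, or 0 if none.

    Assumes cost(n) is a positive, increasing function of n.
    """
    if cost(1) > ops_budget:
        return 0
    lo = 1
    # Double n until the next doubling would exceed the budget.
    while cost(lo * 2) <= ops_budget:
        lo *= 2
    hi = lo * 2  # invariant: cost(lo) <= ops_budget < cost(hi)
    # Binary search between the last feasible and first infeasible size.
    while hi - lo > 1:
        mid = (lo + hi) // 2
        if cost(mid) <= ops_budget:
            lo = mid
        else:
            hi = mid
    return lo


# Hypothetical cost models: operations needed for a problem of size n.
COST_MODELS = {
    "linear": lambda n: n,
    "quadratic": lambda n: n * n,
    "exponential": lambda n: 2 ** n,
}

BASE_BUDGET = 10 ** 9  # assumed operations affordable on the older machine
SPEEDUP = 10 ** 6      # a computer a million times more powerful

if __name__ == "__main__":
    for name, cost in COST_MODELS.items():
        before = largest_feasible_n(cost, BASE_BUDGET)
        after = largest_feasible_n(cost, BASE_BUDGET * SPEEDUP)
        print(f"{name:12s} n before: {before:>16,d}   n after: {after:>20,d}")
```

Under these assumptions, a million-fold faster computer raises the feasible problem size a million-fold for the linear model and a thousand-fold for the quadratic one, but adds only about 20 to n for the exponential one. An algorithm that scales poorly barely benefits from faster hardware.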

Performance Improves with Network Size

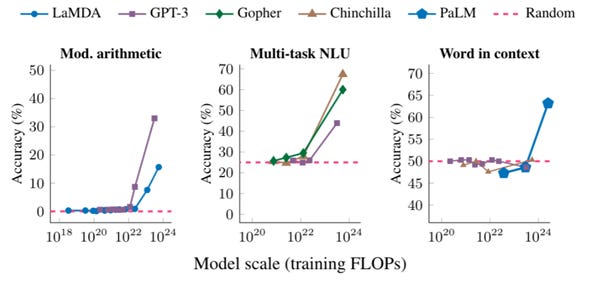

This is illustrated below for three tasks and five different transformer architectures. Performance on each task is essentially at chance until a threshold in model size is reached, after which it rises steeply. When a larger language model is released, new abilities emerge that no one anticipated, which is even more surprising than significant improvements on known tasks.

Performance on three tasks as a function of model scale. Left: multistep arithmetic. Center: college-level exams. Right: identifying the intended meaning of a word in context. Performance rises above random guessing (red dashed line) only for models of sufficiently large scale. The five models are shown as lines of different colors.[3]

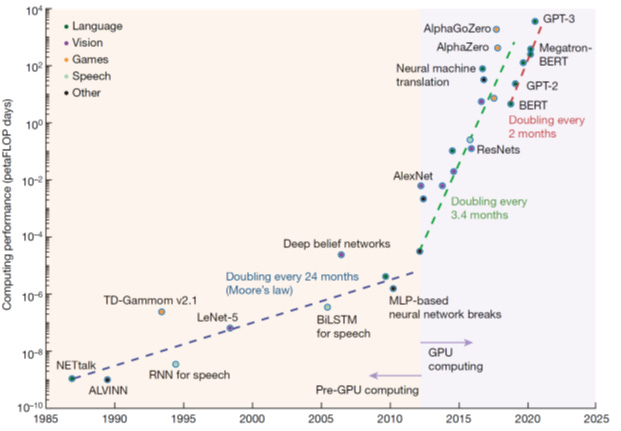

Over the last decade, there has been explosive computational growth. When GPUs were harnessed, there was a six-fold decrease in the doubling time at the inflection point in 2012 (see below). As computing power continued to increase, networks have grown in size. In 2020, GPT-3 had 175 billion weights and was eclipsed in 2023 by GPT-4, reported to have close to two trillion weights.

The amount of computation needed for a neural network model to process an input, called inference, scales with the number of weights when it runs on a single central processor. In the brain, however, the time needed is independent of the number of synapses, since they all work together in parallel. This is also why nature can get by with neurons and synapses working on millisecond time scales, a million times slower than silicon chips. Few algorithms scale this well with the size of the problem. As computing power continues to increase exponentially, it will reach the estimated computing power of human brains at some point in the not-too-distant future.
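The contrast between serial and parallel processing can be sketched by counting steps in a fully connected network. This is an idealized illustration, not a model of any real chip or brain: it assumes one multiply-accumulate per weight, ignores biases and the time needed to sum inputs, and uses made-up layer sizes.

```python
def count_weights(layer_sizes):
    """Number of weights in a fully connected network with the given layer sizes."""
    return sum(n_in * n_out for n_in, n_out in zip(layer_sizes, layer_sizes[1:]))


def serial_steps(layer_sizes):
    """Steps on a single processor: one multiply-accumulate per weight, one at a time."""
    return count_weights(layer_sizes)


def parallel_steps(layer_sizes):
    """Steps when every weight in a layer works at once: only the depth matters."""
    return len(layer_sizes) - 1


if __name__ == "__main__":
    small = [100, 100, 10]        # hypothetical small network
    large = [10_000, 10_000, 10]  # hypothetical network with far more weights
    for name, sizes in (("small", small), ("large", large)):
        print(name, "weights:", count_weights(sizes),
              "serial steps:", serial_steps(sizes),
              "parallel steps:", parallel_steps(sizes))
```

In this sketch the serial step count grows with the number of weights, while the parallel step count stays at the number of layers however wide the network becomes.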

A petaFLOP is 10¹⁵ floating point operations per second (a floating point operation is a single arithmetic operation such as a multiplication, division, or addition). The vertical scale is in petaFLOP-days. In 2020, GPT-3 required 10¹² (a million million) times more computing power to train than NETtalk (my text-to-speech network model) in 1986. [4]
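The units in this caption can be unpacked with a short calculation. One petaFLOP-day is the work done in a day at 10^15 operations per second. The training cost used in the demo is a round placeholder chosen for illustration, not a measured value for any model.

```python
SECONDS_PER_DAY = 24 * 60 * 60
OPS_PER_SECOND_AT_ONE_PETAFLOP = 10 ** 15


def petaflop_days_to_operations(petaflop_days):
    """Total floating point operations in the given number of petaFLOP-days."""
    return petaflop_days * OPS_PER_SECOND_AT_ONE_PETAFLOP * SECONDS_PER_DAY


def scaled_down(petaflop_days, factor):
    """Compute budget that is `factor` times smaller, in petaFLOP-days."""
    return petaflop_days / factor


if __name__ == "__main__":
    print("operations in one petaFLOP-day:", petaflop_days_to_operations(1))

    # Placeholder value for illustration only (not a measured training cost).
    large_run = 1000.0
    small_run = scaled_down(large_run, 10 ** 12)  # a million million times less
    print("large run, operations:", petaflop_days_to_operations(large_run))
    print("small run, operations:", petaflop_days_to_operations(small_run))
```

One petaFLOP-day comes to 8.64 × 10^19 operations, so a gap of 10^12 in training compute is the difference between a job that needs that much work and one that needs under 10^8 operations.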

[1] https://www.brainfacts.org/brain-anatomy-and-function/evolution/2016/image-of-the-week-brains-of-the-animal-kingdom-060616

[2] The most ancient parts of the brain, and the most important for survival, are relatively small in humans and primates. For example, our hypothalamus, which regulates essential physiological functions, is proportionally smaller compared to other species with larger bodies.

[3] https://ai.googleblog.com/2022/11/characterizing-emergent-phenomena-in.html

[4] From: Mehonic, A., Kenyon, A.J. (2022). Brain-inspired computing needs a master plan. Nature 604, 255–260; Sources: Sevilla, J., Heim, L., Ho, A., Besiroglu, T., Hobbhahn, M., Villalobos, P. (2022). “Compute trends across three eras of machine learning”, arXiv, arXiv:2202.05924v2