The founders of AI in the 1950s were inspired by the potential of digital computers for creating a computer version of human intelligence. Computers were being programmed to play chess and prove mathematical theorems. How difficult could it be to program a computer to see? When the MIT AI lab received a government grant to build a robot that could play ping pong, they forgot to ask for money to write a vision program. So, they assigned it to a graduate student. Years later, I asked Marvin Minsky, who was the first director of the MIT AI Lab, whether this was a true story. He told me I had the facts wrong – they had assigned it to undergraduate students as a summer project:

AI researchers vastly underestimated the complexity of seeing because it is so effortless for us to recognize an object. We do this without thinking and do not have conscious access to all the subconscious processing that underlies seeing. Vision programs ballooned in complexity, which was very labor intensive, with no end in sight. Fifty years later, the field of computer vision shifted from reliance on logic to a geometric approach. Slow progress was possible but vision programs were still far from being able to recognize objects in images as well as a young child.

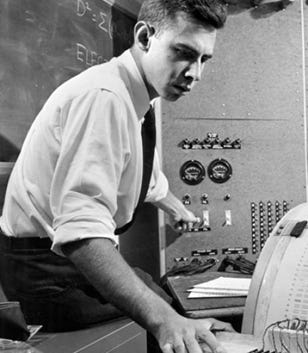

About the same time AI was launched, Frank Rosenblatt at Cornell University had a competing vision for how AI could play out. He invented a simple neural network he called the perceptron that he could train to distinguish between categories of images by judiciously changing the weights after each mistake. The perceptron only had one layer of weights, so it was quite limited in what it could discriminate. Still, he proved that his learning algorithm was guaranteed to find those weights if the perceptron could discriminate the images. There were two problems with this approach. First, simple arithmetic on computers at the time was very slow, and second, one layer of weights was limited in what could be discriminated. It was not until the 1980s that learning algorithms were found that could train multilayer perceptrons. [1]

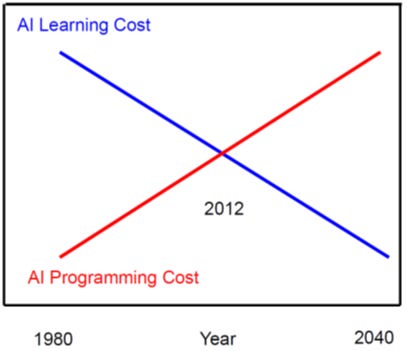

As the cost of computing fell dramatically and computers became powerful enough to train large neural networks, in 2012, Alexnet, with a dozen layers and trained on millions of images from thousands of categories, far outperformed the best vision programs. [2] This was a tipping point for computer vision. Learning from large datasets is now a much more efficient way to make progress on even more complex AI problems. Furthermore, the same neural architectures can be trained with domain data to achieve many other solutions to complex problems.

Traditional AI failed to fulfill its promises because the natural world has shades of gray that do not easily match the black-and-white of true-or-false logic. Neural network models handle uncertainties by learning probabilities from data and combining them to yield accurate predictions. In retrospect, we can see why symbol processing was such an attractive road for early AI. Digital computers are particularly efficient at representing symbols and performing logic, and language was the poster child for symbol processing. However, writing logical programs, even for language, suffered from the curse of dimensionality – an explosion in the number of possible combinations of things and situations that could occur in the world that have to be anticipated by the programmer.

Another shortcoming of writing programs for digital computers is that parallelizing programs to run on many computers simultaneously is challenging. Because neural network models are inherently parallel, they can take full advantage of massively parallel hardware. The modern engine for computing these probabilities is the graphics processing unit (GPU), which has many cores, each of which can work together in parallel. GPUs perform mathematical operations for fast graphics in gaming applications, the same operations as those needed in neural network models. Massive parallelizability is the ultimate reason why neural networks have been so successful. Another lesson is that the available hardware guides the search for new algorithms.

The emphasis on logical reasoning in traditional AI was also misleading. Learning to emulate sequences of logical steps, which mathematicians have mastered, requires a lot of training. Mathematicians may end up with rigorous proofs, but analogies guide their early exploration of mathematical problems. Subconscious processing and intuition are a source of creativity, from art to mathematics. [3] We make rational decisions in unfamiliar settings using analogies with familiar settings, not logic, which is too precise, and ChatGPT shows the same bias. [4] Nor do we know how we make most decisions. We later explain them with plausible rationalizations based on a few of the many factors that went into making the decision. AI researchers in the 20th century tried to write intelligent programs by relying on their intuition for “intelligent” behavior. ChatGPT’s behavior was not programmed but emerged by learning to predict future inputs.

Unlike digital computers, where the same hardware can run different software programs, the hardware is the software in brains. As we learn new things, we modify our “wetware.” Consequently, unlike digital computers, no two brains are identical. Reconstructing how neurons are connected and recording what they communicate will reveal the algorithms discovered by nature. Brains run many interacting algorithms [5]. The problem of integrating all the subsystems is alleviated because they are all built with neurons that can adapt to each other, as reflected in the rapid progress that has been made in building and integrating diverse multimodal neural network architectures to achieve the goals of AI.

The current generation of neural network models is based on a handful of basic principles inspired by brain architecture: start with a huge number of highly interconnected, nonlinear processing units, add a magic sauce — learning, and mix well with data. More computational principles from Nature will be discussed in a future post. [5]

[1] Sejnowski, T. J., The Deep Learning Revolution, Cambridge, MA: MIT Press (2018).

[2] Krizhevsky A, Sutskever I, Hinton GE ImageNet Classification with Deep Convolutional Neural Networks Proceedings of the 25th International Conference on Neural Information Processing Systems (Lake Tahoe, NV, Dec. 2012), 1097–1105.

[3] Ritter SM, Dijksterhuis A. (2014). Creativity-the unconscious foundations of the incubation period. Front Hum Neurosci., 8, 215.

[4] Dasgupta, I., Lampinen, A. K., Chan, S. C. Y., Creswell, A., Kumaran, D., McClelland, J. L., Hill, F. (2022) Language models show human-like content effects on reasoning arXiv:2207.07051

[5] Navlakha, S. (2018). Why Animal Extinction Is Crippling Computer Science: As the work of biologists and computer scientists converge, algorithmic secrets are increasingly found in nature, Wired Magazine. Sep 19, 2018, https://www.wired.com/story/why-animal-extinction-is-crippling-computer-science/

A pleasant surprise to see the photo of the young Frank Rosenblatt. He was a friend of the family, who I met at Cornell as an undergraduate. At the time I had no idea that his work was so pioneering. His unfortunate accident cut short his life. Thank you for your fascinating posts.

Images are tricky. Also I can now understand the problem of creating images. How do you explain color to someone who does not see in the traditional way? Fascinating post, thank you!